Problem Statement

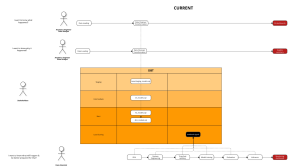

In brief, we work with a company that lets its customers sign up for accounts. The company has many branches, and a customer can open one or more accounts at any branch. As a result, duplicate customer records appear, which is the problem we address here.

When signing up, a customer provides the following information:

- first name

- last name

- email

- date of birth

- phone number

Sample data:

| first name | last name | email | date of birth | phone number |
|---|---|---|---|---|
| Ana | Laurel | ana_laurel@yahoo.com | 02/01/1990 | 3102105770 |

Approaches:

- Hard coding: compare each record with every other record and check:

- if they match exactly → a match

- otherwise → distinct
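The hard-coded comparison above can be sketched in plain Python. This is only an illustration, not the project's actual code: the field names, the `exact_match_pairs` helper, and every record except the Ana Laurel sample row are hypothetical.

```python
from itertools import combinations

# The first record is the sample row; the other two are made up for illustration.
records = [
    {"first_name": "Ana", "last_name": "Laurel", "email": "ana_laurel@yahoo.com",
     "date_of_birth": "02/01/1990", "phone_number": "3102105770"},
    {"first_name": "ana", "last_name": "laurel", "email": "ana_laurel@yahoo.com",
     "date_of_birth": "02/01/1990", "phone_number": "3102105770"},
    {"first_name": "Ana", "last_name": "Laurel", "email": "ana_laurel@yahoo.com",
     "date_of_birth": "02/01/1990", "phone_number": "3102105770"},
]


def exact_match_pairs(rows):
    """Return index pairs (i, j), i < j, whose fields are all identical."""
    return [
        (i, j)
        for (i, a), (j, b) in combinations(enumerate(rows), 2)
        if a == b
    ]


print(exact_match_pairs(records))  # [(0, 2)]
```

Record 1 differs from the others only in letter case, yet exact matching labels it as distinct. That brittleness is why the other approaches are worth exploring.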

- Dedupe: active learning from user labelling.

- string distance: affine gap

- model: regularized logistic regression

- results
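To make the affine gap idea concrete: it is an edit distance in which opening a gap costs more than extending one, so a single long insertion (such as an added middle name) is penalized less than several scattered ones. The following is a generic Gotoh-style sketch. The function name and all cost values are assumptions chosen for illustration; they are not Dedupe's own implementation or weights.

```python
def affine_gap_distance(a, b, mismatch=1.0, gap_open=1.0, gap_extend=0.5):
    """Edit distance where the first character of a gap costs gap_open
    and every further character of the same gap costs gap_extend."""
    inf = float("inf")
    n, m = len(a), len(b)
    # sub[i][j]: best cost with a[i-1] aligned to b[j-1]
    # gap_b[i][j]: best cost with a[i-1] aligned to a gap
    # gap_a[i][j]: best cost with b[j-1] aligned to a gap
    sub = [[inf] * (m + 1) for _ in range(n + 1)]
    gap_b = [[inf] * (m + 1) for _ in range(n + 1)]
    gap_a = [[inf] * (m + 1) for _ in range(n + 1)]
    sub[0][0] = 0.0
    for i in range(1, n + 1):
        gap_b[i][0] = gap_open + (i - 1) * gap_extend
    for j in range(1, m + 1):
        gap_a[0][j] = gap_open + (j - 1) * gap_extend
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = 0.0 if a[i - 1] == b[j - 1] else mismatch
            sub[i][j] = cost + min(sub[i - 1][j - 1], gap_b[i - 1][j - 1], gap_a[i - 1][j - 1])
            gap_b[i][j] = min(sub[i - 1][j] + gap_open,
                              gap_b[i - 1][j] + gap_extend,
                              gap_a[i - 1][j] + gap_open)
            gap_a[i][j] = min(sub[i][j - 1] + gap_open,
                              gap_a[i][j - 1] + gap_extend,
                              gap_b[i][j - 1] + gap_open)
    return min(sub[n][m], gap_b[n][m], gap_a[n][m])


print(affine_gap_distance("Laurel", "Laurel"))  # 0.0
print(affine_gap_distance("abcd", "abxxcd"))    # 1.5 (one gap of length 2)
print(affine_gap_distance("abcd", "axbcxd"))    # 2.0 (two gaps of length 1)
```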

- Research new approach

- data: labelled record pairs (40 match pairs and 460 distinct pairs)

- idea:

- each record has 5 fields, and 2 of them, name (first and last) and email, carry the critical information. They can be turned into features by vectorizing the text (converting words to vectors) to train an ML model.

- date of birth and phone number are numeric values and can be used as features as well.

- steps:

- clean data

- replace NaN value in date of birth with “01-01-1800”

- remove “-” and convert to integer (“01-01-1800” → 1011800; the leading zero is lost in the integer form)

- replace NaN value in phone number with “99999999999”
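The cleaning steps above could look roughly like this in plain Python. The helper names are hypothetical, and two things are assumed rather than taken from the original notebook: that dates arrive as "-"-separated strings, and that the phone number is also converted to an integer.

```python
import math

DOB_PLACEHOLDER = "01-01-1800"     # placeholder for a missing date of birth
PHONE_PLACEHOLDER = "99999999999"  # placeholder for a missing phone number


def is_missing(value):
    """True for None or a float NaN."""
    return value is None or (isinstance(value, float) and math.isnan(value))


def clean_date_of_birth(value):
    """Fill missing dates, drop the '-' separators, and convert to int."""
    text = DOB_PLACEHOLDER if is_missing(value) else str(value)
    return int(text.replace("-", ""))


def clean_phone_number(value):
    """Fill missing phone numbers and convert to int (an assumption)."""
    text = PHONE_PLACEHOLDER if is_missing(value) else str(value)
    return int(text)


print(clean_date_of_birth("02-01-1990"))  # 2011990
print(clean_date_of_birth(float("nan")))  # 1011800
print(clean_phone_number(None))           # 99999999999
```

Note that the integer conversion drops leading zeros, so if that matters for matching, keeping the digits as a string may be the safer choice.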

- Vectorize:

- use `TfidfVectorizer` from `sklearn`

- the final feature matrix has shape `40 * 198` for match and `460 * 198` for distinct (quite imbalanced)
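As a rough sketch of the vectorizing step, the name and email of each record can be joined into one string and passed to `TfidfVectorizer`. The character n-gram settings, the way the text is joined, and all rows except the Ana Laurel sample are assumptions; the original notebook may configure the vectorizer differently and combine records into pair features in its own way.

```python
from sklearn.feature_extraction.text import TfidfVectorizer

# First row is the sample record; the others are hypothetical.
texts = [
    "Ana Laurel ana_laurel@yahoo.com",
    "Anna Laurel ana.laurel@yahoo.com",
    "Bob Stone bob_stone@example.com",
]

# Character n-grams (an assumed setting) tolerate small spelling differences
# better than whole-word tokens do.
vectorizer = TfidfVectorizer(analyzer="char_wb", ngram_range=(2, 3))
X = vectorizer.fit_transform(texts)

# One row per input string, one column per learned n-gram.
print(X.shape)
```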

- Train and test the models: since no separate test data is available yet, the models are trained and evaluated on the same data.

- Logistic Regression:

Recall: 0.8 Precision: 0.9831932773109244

- Linear SVM:

Recall: 0.8 Precision: 0.9831932773109244

- XGBoost:

Recall: 1.0 Precision: 1.0
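The evaluation setup described above, fitting and scoring on the same data, can be sketched as follows. The feature matrix here is synthetic random data with the same shape and class balance as reported (40 match rows, 460 distinct rows, 198 columns), so the printed numbers will not reproduce the results listed. XGBoost is left out only to avoid an extra dependency, and the hyperparameters are assumptions.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import precision_score, recall_score
from sklearn.svm import LinearSVC

rng = np.random.default_rng(0)

# Synthetic stand-in for the real features. The +0.3 shift is arbitrary and
# only serves to make the two classes differ.
X = rng.normal(size=(500, 198))
y = np.array([1] * 40 + [0] * 460)  # 1 = match, 0 = distinct
X[y == 1] += 0.3

models = {
    "Logistic Regression": LogisticRegression(max_iter=1000),
    "Linear SVM": LinearSVC(max_iter=10000),
}

scores = {}
for name, model in models.items():
    model.fit(X, y)
    predicted = model.predict(X)  # scored on the same rows used for fitting
    scores[name] = (recall_score(y, predicted), precision_score(y, predicted))
    print(f"{name}: recall={scores[name][0]:.3f} precision={scores[name][1]:.3f}")
```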


- Comments:

- XGBoost seems to be overfitting, which is understandable because the dataset is small and imbalanced. We can confirm whether it is overfitting once we have a test set.

- Logistic Regression and SVM look promising. We might improve their performance by increasing the amount of data and the number of features.
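Until a real test set exists, one possible way to probe for overfitting is stratified cross-validation, which keeps the match/distinct ratio in every fold and reports scores on rows the model did not see. This is a suggestion rather than something done in the original work; the data below is synthetic, and `class_weight="balanced"` is an assumed setting for handling the imbalance.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_validate

rng = np.random.default_rng(0)

# Synthetic stand-in with the reported shape: 40 match and 460 distinct rows.
X = rng.normal(size=(500, 198))
y = np.array([1] * 40 + [0] * 460)
X[y == 1] += 0.3  # arbitrary shift so the classes differ

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
model = LogisticRegression(max_iter=1000, class_weight="balanced")

results = cross_validate(
    model, X, y, cv=cv,
    scoring=("recall", "precision"),
    return_train_score=True,
)

# A large gap between training and held-out scores suggests overfitting.
for metric in ("recall", "precision"):
    train = results[f"train_{metric}"].mean()
    held_out = results[f"test_{metric}"].mean()
    print(f"{metric}: train={train:.3f} held-out={held_out:.3f}")
```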


- Reference: Colab Notebook

Lead Analytics Engineer at Joon Solutions Global

Data Analyst | Business Intelligence | BI Consultant

Latest posts by Na Nguyen Thi (see all)


- Data Deduplication with ML - November 2, 2022